Neutron and SDN

P.S: This picture shows the overall architecture of Neutron integrated with an SDN controller.

When an OpenStack user performs any networking-related operation (create/update/delete/read on network, subnet and port resources), the typical flow is as follows:

- The user operation on the OpenStack dashboard (Horizon) is translated into the corresponding networking API call and sent to the Neutron server.

- The Neutron server receives the request and passes it to the configured plugin (assume ML2 is configured with an ODL mechanism driver and a VXLAN type driver).

- The Neutron server/plugin will make the appropriate change to the DB.

- The plugin will invoke the corresponding REST API on the SDN controller (assume ODL).

- ODL, upon receiving this request, may perform necessary changes to the network elements using any of the southbound plugins/protocols, such as OpenFlow, OVSDB or OF-Config.
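The flow above can be sketched in a few lines of Python. This is only an illustrative model, not Neutron or ODL code: `FakeController`, `SketchPlugin`, `handle_api_request` and the `/networks` path are hypothetical names chosen for the example, and the "REST call" is recorded in memory instead of being sent over HTTP.

```python
import json
from dataclasses import dataclass, field


@dataclass
class FakeController:
    """Hypothetical stand-in for an SDN controller's northbound REST endpoint."""
    received: list = field(default_factory=list)

    def request(self, method, path, body):
        # A real driver would send an HTTP request here; this sketch only
        # records what would have been sent.
        self.received.append((method, path, json.loads(body)))
        return 200


@dataclass
class SketchPlugin:
    """Illustrative plugin: update the DB first, then notify the controller."""
    controller: FakeController
    db: dict = field(default_factory=dict)

    def create_network(self, network):
        # Make the change in the (in-memory) database.
        self.db[network["id"]] = network
        # Invoke the controller's REST API with the same resource.
        body = json.dumps({"network": network})
        return self.controller.request("POST", "/networks", body)


def handle_api_request(plugin, resource, payload):
    """The server receives the API call and passes it to the configured plugin."""
    handlers = {"network": plugin.create_network}
    return handlers[resource](payload)
```

Calling `handle_api_request(plugin, "network", {"id": "net-1", "name": "demo"})` stores the network in `plugin.db` and leaves one recorded `POST` in `controller.received`. What the controller then does on the southbound side (OpenFlow, OVSDB, and so on) is outside this sketch.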

Note that there are different options for integrating the SDN controller with OpenStack; for example:

- one can completely eliminate RPC communications between the Neutron server and agents on the compute node, with the SDN controller being the sole entity managing the network

- or the SDN controller manages only the physical switches, while the virtual switches are managed directly from the Neutron server.

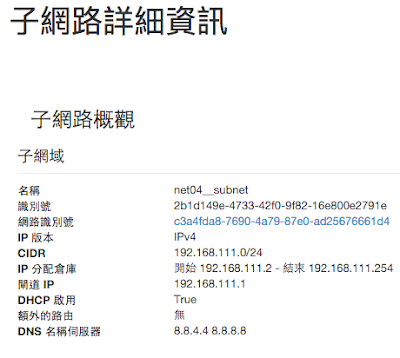

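The two models of Neutron's underlying network are as follows.

In the first model, a), Neutron itself acts as the SDN controller, and the communication mechanism between the plugin and the agents (such as RPC) serves as a simple southbound protocol. In the second model, Neutron acts as an SDN application: it passes the service requirements to the SDN controller, which then controls the network devices remotely through any of a wide variety of southbound protocols.

In the second model, b), Neutron can also be viewed as a super controller or network orchestrator that handles the centralized distribution of network services in OpenStack.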

Plugin Agent

P.S: This picture shows the process flow between the agents, the API server and OVS when creating a VM.

http://www.innervoice.in/blogs/wp-content/uploads/2015/03/Plugin-Agents.jpg


About ML2

Neutron plugin architecture

How OpenDaylight integrates with Neutron

How ONOS integrates with Neutron

SONA Architecture

The onos-networking plugin simply forwards REST calls from nova to ONOS; the OpenstackSwitching app receives the API calls and returns OK. The main functions that implement the virtual networks are handled in the OpenstackSwitching application.

OpenstackSwitching (App on ONOS)

Neutron + SDN Controller (ONOS)

P.S: ONOS provides its own ONOSMechanismDriver, which is used in place of the OpenvswitchMechanismDriver.

Reference:

This article describes how to write a dummy mechanism driver that records variables and data in the logs:

http://blog.csdn.net/yanheven1/article/details/47357537
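As a rough idea of what such a logging-only driver could look like, here is a minimal sketch. It is not taken from the article above. The hook names (`initialize`, `create_network_precommit`, `create_network_postcommit`, `create_port_postcommit`) and `context.current` follow the ML2 mechanism driver interface, but the class is kept as a plain Python class so it runs without Neutron installed; in a real deployment it would presumably subclass `neutron_lib.plugins.ml2.api.MechanismDriver`. `DummyMechanismDriver`, `FakeContext` and the logger name are made up for this example.

```python
import logging

LOG = logging.getLogger("dummy_mech_driver")


class DummyMechanismDriver:
    """Logging-only driver sketch.

    Assumption: a real driver would subclass
    neutron_lib.plugins.ml2.api.MechanismDriver. A plain class is used
    here so the example has no Neutron dependency.
    """

    def initialize(self):
        LOG.info("dummy mechanism driver initialized")

    def create_network_precommit(self, context):
        # Called before the DB change is committed; only log here.
        LOG.info("create_network_precommit: %s", context.current)

    def create_network_postcommit(self, context):
        # Called after the DB change has been committed.
        LOG.info("create_network_postcommit: %s", context.current)

    def create_port_postcommit(self, context):
        LOG.info("create_port_postcommit: %s", context.current)


class FakeContext:
    """Hypothetical stand-in for the ML2 context object passed to each hook."""

    def __init__(self, current):
        # `current` holds the resource dict in its new state.
        self.current = current
```

With a handler attached to the `dummy_mech_driver` logger, calling `DummyMechanismDriver().create_network_postcommit(FakeContext({"id": "net-1"}))` logs the resource dict, which is a convenient way to see what data ML2 hands to a driver at each hook.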